A.I.O.C.A.P.

Automatic Intelligent Out of Control Action Proposal

A.I.O.C.A.P. is an AI system for decision support in solving quality and process problems.

Expected results

The aim of this solution is to improve problem-solving performance by immediately identifying the root cause of a problem, without wasted time or breakdowns.

The solution has two main goals:

Problem Solving

The first goal is to support the operator in real time in solving quality problems encountered during the production process.

This support takes the form of suggestions provided directly and automatically by the system. Each suggestion is based on a probabilistic evaluation of the current state of the process and on an Action-State utility model that the system updates automatically according to the choices made by the operators.

Shared Knowledge

The second goal is to transfer knowledge from people to the machine, through a form of machine learning that starts from an initial knowledge base.

This also enables a second kind of knowledge transfer, from more experienced operators to less experienced ones, which improves the performance of every individual operator.


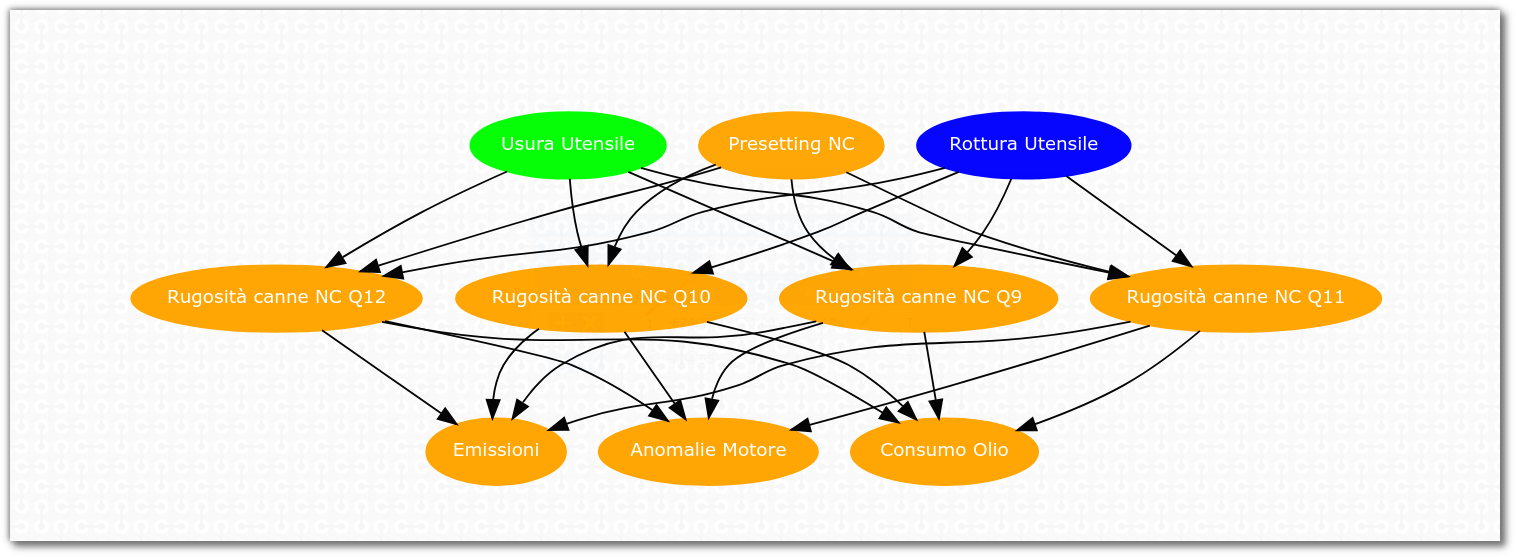

The Bayesian network

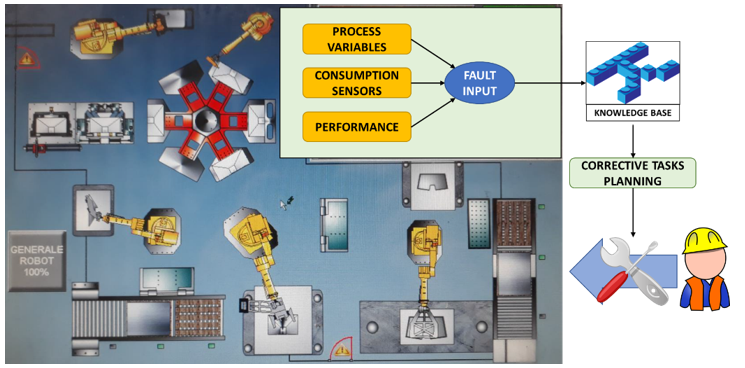

The first step to implement an A.I.O.C.A.P. system is to create a probabilistic knowledge base.

Starting from this knowledge base, organized as a Bayesian network, the system proposes candidate solutions called action plans.

When the system automatically detects an event linked to one or more quality problems, the Bayesian network provides a degree of belief, expressed as a probability, for the current state of the process.
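As an illustration of this kind of inference, the sketch below uses the simplest possible Bayesian network: a single root-cause node whose children are the observed symptoms, so the posterior follows directly from Bayes' rule. The state names, symptoms, and probabilities (`PRIOR`, `LIKELIHOOD`) are hypothetical; the structure of the real A.I.O.C.A.P. network is not described here.

```python
# Hypothetical knowledge base: prior probability of each process state (root cause)
# and the probability of observing each symptom given that state.
# All names and numbers are illustrative, not taken from the real system.
PRIOR = {
    "worn_tool": 0.5,
    "misaligned_fixture": 0.3,
    "bad_material": 0.2,
}

# P(symptom observed | state)
LIKELIHOOD = {
    "scratch": {"worn_tool": 0.7, "misaligned_fixture": 0.2, "bad_material": 0.4},
    "dent": {"worn_tool": 0.1, "misaligned_fixture": 0.6, "bad_material": 0.3},
}


def posterior(evidence: dict[str, bool]) -> dict[str, float]:
    """Return P(state | evidence), assuming symptoms are independent given the state."""
    unnormalized = {}
    for state, prior in PRIOR.items():
        p = prior
        for symptom, observed in evidence.items():
            p_symptom = LIKELIHOOD[symptom][state]
            # Multiply by P(symptom | state) if observed, else by P(not symptom | state).
            p *= p_symptom if observed else 1.0 - p_symptom
        unnormalized[state] = p
    total = sum(unnormalized.values())
    # Normalize so the degrees of belief sum to 1.
    return {state: p / total for state, p in unnormalized.items()}


if __name__ == "__main__":
    beliefs = posterior({"scratch": True, "dent": False})
    # List the most probable causes first.
    for state, p in sorted(beliefs.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{state}: {p:.2f}")
```

Sorting the posterior in this way is one simple way to obtain a list of the most probable causes to present to the operator.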

The logic of the system

The system makes decisions by combining the concepts of probability and utility: it weighs how likely each state of the process is against how useful each action would be in that state.
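A standard way to combine probability and utility is the maximum expected utility rule from decision theory. In the minimal sketch below, which assumes hypothetical states, actions, and utility values (`BELIEFS`, `UTILITY`), each action is scored as EU(a) = Σ_s P(s) · U(a, s), and the actions are sorted by that score to form a ranked action plan. It illustrates the principle and is not the system's actual model.

```python
# Hypothetical beliefs over process states, e.g. the output of the Bayesian network.
BELIEFS = {"worn_tool": 0.80, "misaligned_fixture": 0.06, "bad_material": 0.14}

# Hypothetical Action-State utility table: how useful each action is if the process
# is really in a given state (higher is better; wasted interventions score negative).
UTILITY = {
    "replace_tool": {"worn_tool": 10.0, "misaligned_fixture": -2.0, "bad_material": -2.0},
    "realign_fixture": {"worn_tool": -1.0, "misaligned_fixture": 8.0, "bad_material": -1.0},
    "change_material_batch": {"worn_tool": -3.0, "misaligned_fixture": -3.0, "bad_material": 9.0},
}


def expected_utility(action: str, beliefs: dict[str, float]) -> float:
    """EU(a) = sum over states s of P(s) * U(a, s)."""
    return sum(p * UTILITY[action][state] for state, p in beliefs.items())


def action_plan(beliefs: dict[str, float]) -> list[tuple[str, float]]:
    """Rank all candidate actions from highest to lowest expected utility."""
    ranked = [(action, expected_utility(action, beliefs)) for action in UTILITY]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for action, score in action_plan(BELIEFS):
        print(f"{action}: {score:.2f}")
```

With these numbers `replace_tool` comes first, because the belief is concentrated on `worn_tool`; if the beliefs shifted toward another state, the ranking would change accordingly.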

Once the knowledge base has been established, it is tested in two specific situations:

- During quality control (when the operator examines a sample)

- When scrap is detected (through an Andon system)

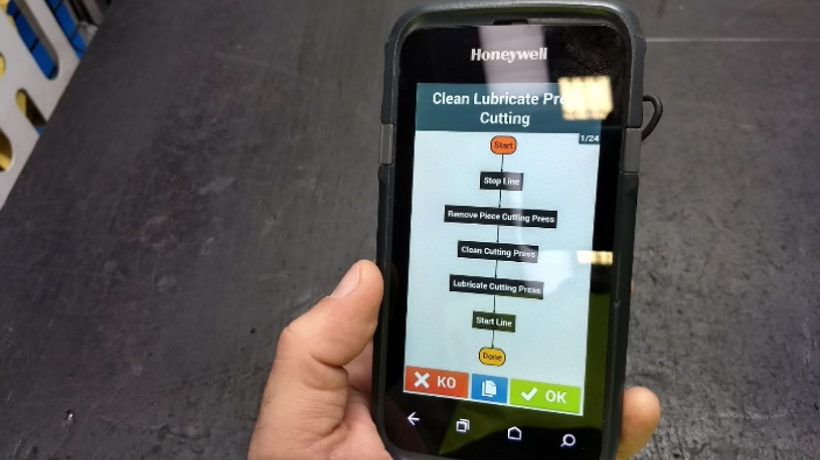

In both cases, the operator, equipped with a mobile device, receives a notification showing the most probable causes of the anomaly. The operator can then review the sequence of actions worked out by the smart agent.

The operator must then verify which of the proposed action plans is correct, that is, which one actually solves the problem.

Once the problem has been solved, the operator confirms it to the system, which reinforces that specific solution for that specific problem.
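One simple way to model this reinforcement is an incremental value update of the kind used in multi-armed bandit methods: each confirmation moves the stored value of a (problem, action) pair toward a reward of 1 if the action solved the problem and 0 otherwise. The sketch below assumes a hypothetical `ActionValues` table seeded with expert estimates and a hypothetical `LEARNING_RATE`; it is not necessarily the update rule A.I.O.C.A.P. uses.

```python
LEARNING_RATE = 0.1  # hypothetical step size: how strongly one confirmation counts


class ActionValues:
    """Hypothetical table of learned values for (problem, action) pairs."""

    def __init__(self, expert_values: dict[tuple[str, str], float], default: float = 0.5) -> None:
        # Start from the values estimated by experts (the initial knowledge base).
        self.values = dict(expert_values)
        # Value assumed for pairs the experts did not rate.
        self.default = default

    def value(self, problem: str, action: str) -> float:
        return self.values.get((problem, action), self.default)

    def confirm(self, problem: str, action: str, solved: bool) -> float:
        """Move the stored value toward the reward: 1.0 if solved, 0.0 otherwise."""
        reward = 1.0 if solved else 0.0
        old = self.value(problem, action)
        new = old + LEARNING_RATE * (reward - old)
        self.values[(problem, action)] = new
        return new

    def ranked_actions(self, problem: str, actions: list[str]) -> list[str]:
        """Order candidate actions for a problem from most to least valuable."""
        return sorted(actions, key=lambda a: self.value(problem, a), reverse=True)
```

For example, starting from expert values of 0.6 for `replace_tool` and 0.5 for `polish_die` on a `scratch` problem, one confirmed success for `polish_die` and one failure for `replace_tool` are enough to swap their order (0.55 versus 0.54). A small learning rate keeps single confirmations from overturning the expert knowledge base too quickly.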

Case study

This project led to a case study that became the basis of a scientific publication.

The publication received the “second best paper” award from the University of Cartagena, in the “Manufacturing and industrial process design” category.

The paper title is “A decision theory approach to support action plans in cooker hoods manufacturing”.

Obtained Results

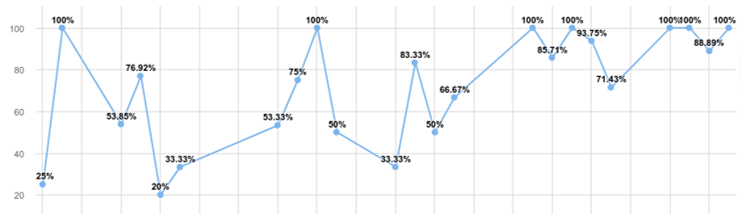

Thanks to reinforcement learning, the system starts from the knowledge base built by experts and gradually improves over time.

The graph, based on the use of this system at the plant of one of our clients, shows that about a year after the learning process began, the system recorded an average first-attempt success rate of 95%.
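The first-attempt success rate can be read as the fraction of events solved by the first proposed action plan. Below is a sketch of how such a monthly figure could be computed, assuming a hypothetical event log of `(month, solved_by_first_plan)` pairs; the client data behind the graph are not reproduced here.

```python
from collections import defaultdict
from collections.abc import Iterable


def first_attempt_success_by_month(events: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Return {month: fraction of events solved by the first proposed action plan}.

    `events` is a hypothetical log of (month, solved_by_first_plan) pairs,
    e.g. ("2022-03", True).
    """
    totals: defaultdict[str, int] = defaultdict(int)
    successes: defaultdict[str, int] = defaultdict(int)
    for month, solved_first in events:
        totals[month] += 1
        successes[month] += int(solved_first)
    # Months in chronological order (ISO "YYYY-MM" strings sort correctly).
    return {month: successes[month] / totals[month] for month in sorted(totals)}
```

Plotting these monthly values over time gives a learning curve of the kind described here, where early months can fluctuate before the rate settles.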

The first part of the graph shows the learning trend: although the curve is “turbulent”, the system reaches excellent results within a few months of operation.

The added value of the system is confirmed by the support it gives to new operators, who are not yet experts in the line's production process: the system provides them with process knowledge it has learned from the more experienced operators.

“One day machines will be able to solve all problems, but they will never be able to pose one.”


© 2023 NeXT Srl Unipersonale - P.IVa. 02510420421 - Privacy and cookie information - Powered by Fuel31